When an AI suggests “optimizing” your Verilog, it’s optimizing for the wrong things entirely.

Here’s a thought experiment.

Imagine you’re a chef, and you hire a consultant to optimize your kitchen. The consultant arrives with a software engineering background. They look at your workflow and immediately start suggesting improvements: “Parallelize your prep work across more stations. Cache frequently-used ingredients closer to the cooking surface. Batch similar operations to reduce context-switching.”

These suggestions make perfect sense for software. But in a kitchen, “parallelizing” means more cooks (higher labor costs). “Caching” means more counter space (higher real estate costs). And “batching” might mean food sits longer before serving (lower quality).

The consultant isn’t wrong about optimization. They’re optimizing for the wrong objectives.

This is precisely what happens when general-purpose AI coding assistants attempt to help with RTL design. The cause is not that the model is unintelligent or poorly trained. The cause is that it has learned optimization from software, and hardware design is a fundamentally different discipline. The assumptions embedded in most AI coding assistants come from billions of lines of Python, C++, JavaScript, and similar languages. Those assumptions do not just fail to transfer to RTL design. In many cases, they point in the wrong direction.

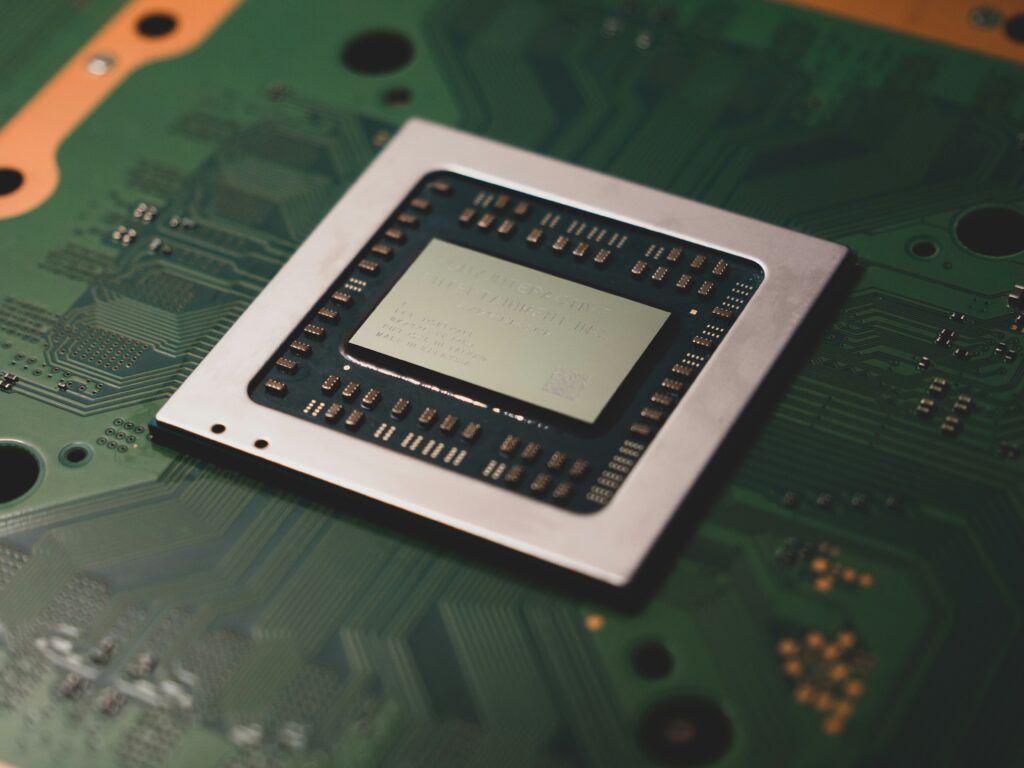

For engineers working in semiconductor design, this mismatch is becoming increasingly visible as general-purpose AI tools move into RTL workflows. The issue is not syntax generation. Modern models can write syntactically correct Verilog or SystemVerilog. The issue is that they do not yet understand what good RTL looks like in the context of silicon.

The Fundamental Divide

The key difference between software optimization and hardware optimization can be summarized in two sentences:

Software engineers optimize code to run efficiently on existing hardware. Hardware engineers optimize the hardware itself.

This distinction seems subtle, but it changes everything.

In software development, the hardware platform is fixed. A developer writes Python or C++ to run on a CPU, GPU, or cloud server. Optimization means extracting better performance from a given platform. You may change algorithms, memory layout, or concurrency patterns, but the underlying hardware remains the same.

In RTL design, the hardware is not fixed. It is being created.

Every line of Verilog eventually becomes physical transistors, wires, routing tracks, and metal layers on silicon. Decisions in RTL determine how many gates exist, how they are connected, how far signals must travel, and how much power is consumed.

In software, optimization is about using resources well. In hardware, optimization is about deciding what resources exist.

What AI Has Learned From Software

Modern AI coding assistants have absorbed optimization knowledge from massive software corpora. They have learned patterns that are almost always beneficial in software engineering:

- Reduce algorithmic complexity.

- Parallelize independent tasks.

- Use caching to improve locality.

- Add abstraction for readability.

- Scale horizontally across machines.

These principles share a common assumption: computational resources are cheap and flexible. More CPU cores, more memory, or more servers usually improve performance at manageable cost.

In software systems, the marginal cost of additional computation is close to zero. Cloud infrastructure can be scaled. Memory can be added. Latency can be traded for throughput.

This worldview is deeply embedded in AI models.

It is also incompatible with silicon design.

What Hardware Optimization Actually Means

In semiconductor design, optimization revolves around three tightly coupled constraints known as PPA: Power, Performance, and Area.

These are not abstract metrics. They are physical limits that determine whether a chip can be manufactured, whether it functions correctly, and whether it is commercially viable.

Performance in Hardware

In software, performance usually means execution speed.

In hardware, performance means timing closure. Every signal must propagate through logic and interconnect within a clock cycle. If a signal arrives late, even by picoseconds, a register can capture the wrong value. The chip does not degrade gracefully at its target frequency. It produces incorrect results.

Hardware performance optimization involves:

- Shortening critical paths.

- Balancing pipeline stages.

- Managing setup and hold timing.

- Ensuring operation across process, voltage, and temperature corners.

When an AI assistant suggests restructuring logic for “parallelism,” it may unintentionally create longer routing paths, additional synchronization logic, or congestion that worsens timing.
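To make the timing argument concrete, here is a minimal sketch of a setup-slack calculation for a single register-to-register path. The function name and every delay value are illustrative assumptions, not figures from a real design, and the model ignores clock skew and jitter for simplicity.

```python
# Minimal setup-slack model for one register-to-register path.
# All delay values are hypothetical and given in picoseconds.
# Clock skew and jitter are ignored to keep the sketch small.

def setup_slack_ps(clock_period_ps, clk_to_q_ps, logic_delay_ps,
                   wire_delay_ps, setup_time_ps):
    """Return setup slack: required arrival time minus actual arrival time."""
    arrival = clk_to_q_ps + logic_delay_ps + wire_delay_ps
    required = clock_period_ps - setup_time_ps
    return required - arrival

# A 1 GHz target clock gives a 1000 ps period.
compact = setup_slack_ps(1000, clk_to_q_ps=80, logic_delay_ps=700,
                         wire_delay_ps=120, setup_time_ps=50)

# The same logic after a restructure that spreads blocks apart and
# lengthens routing (assumed extra wire delay of 80 ps).
spread = setup_slack_ps(1000, clk_to_q_ps=80, logic_delay_ps=700,
                        wire_delay_ps=200, setup_time_ps=50)

print(f"compact layout slack: {compact} ps")
print(f"spread layout slack:  {spread} ps")
```

Under these assumed numbers the logic is unchanged, yet the longer routing alone turns positive slack into a violation.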

Power in Hardware

Software optimization rarely considers power explicitly.

In silicon, power is central. Dynamic power increases with switching activity, capacitance, frequency, and the square of the supply voltage. Leakage power grows with transistor count and tends to worsen at advanced process nodes.

Every additional gate consumes energy. Every register's clock input toggles each cycle unless it is clock-gated. Excessive power leads to thermal problems, battery drain, or outright failure.

When AI suggests adding logic to improve throughput, it may be increasing power beyond acceptable limits.
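The standard first-order model for dynamic power is P = α · C · V² · f, where α is the switching activity factor. The sketch below applies it to a hypothetical block before and after its logic is duplicated for throughput. The capacitance, voltage, activity, and frequency values are assumptions chosen only for illustration.

```python
# First-order dynamic power model: P = alpha * C * V^2 * f.
# Every numeric value below is an illustrative assumption.

def dynamic_power_w(activity, capacitance_f, vdd_v, freq_hz):
    """Return dynamic power in watts for the given switching parameters."""
    return activity * capacitance_f * vdd_v ** 2 * freq_hz

# Hypothetical block: 2 nF of switched capacitance, 0.8 V, 1 GHz, 15% activity.
base = dynamic_power_w(0.15, 2e-9, 0.8, 1e9)

# Duplicating the datapath roughly doubles the switched capacitance.
duplicated = dynamic_power_w(0.15, 4e-9, 0.8, 1e9)

print(f"baseline:   {base:.3f} W")
print(f"duplicated: {duplicated:.3f} W")
```

In this simplified model, doubling the switched capacitance doubles dynamic power, before any increase in leakage is counted.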

Area in Hardware

In software, memory and storage are inexpensive.

In silicon, area translates directly into manufacturing cost. A larger die reduces yield, increases packaging cost, and lowers profitability. A 10 percent area increase can translate into a much larger cost increase due to yield loss.

When an AI recommends loop unrolling or logic replication, it may be multiplying area dramatically.
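A rough way to see why cost grows faster than area is the Poisson yield model, Y = exp(−A · D0), combined with a dies-per-wafer estimate. The sketch below is a simplification: the wafer cost, defect density, and die sizes are assumed values, and the dies-per-wafer estimate ignores edge loss and scribe lines.

```python
import math

# Cost per good die under a Poisson yield model, Y = exp(-A * D0).
# Wafer cost, defect density, and die areas are illustrative assumptions.

def cost_per_good_die(die_area_mm2, wafer_cost=10_000.0,
                      wafer_diameter_mm=300.0,
                      defect_density_per_mm2=0.001):
    """Return the estimated cost of one working die."""
    wafer_area = math.pi * (wafer_diameter_mm / 2) ** 2
    gross_dies = wafer_area / die_area_mm2  # ignores edge loss
    yield_fraction = math.exp(-die_area_mm2 * defect_density_per_mm2)
    return wafer_cost / (gross_dies * yield_fraction)

base = cost_per_good_die(400.0)    # hypothetical 400 mm^2 die
bigger = cost_per_good_die(440.0)  # same design with 10% more area
increase = bigger / base - 1.0

print(f"cost increase for 10% more area: {increase:.1%}")
```

With these assumptions, a 10 percent area increase raises the cost per good die by more than 10 percent, because fewer dies fit on the wafer and a smaller fraction of them work. The gap widens as die size or defect density grows.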

Tradeoffs That Software Models Do Not Understand

In software engineering, optimizations often align. A faster algorithm frequently does less work overall, and cleaner code improves maintainability.

In hardware, optimization is multi-objective. Improving one dimension often worsens another.

Higher frequency may increase power. Lower power may require additional area. Better timing may require more pipeline stages and increased latency.

Hardware engineers operate on a Pareto frontier, balancing tradeoffs based on product requirements. Mobile chips prioritize power efficiency. Server chips prioritize throughput. IoT devices prioritize cost and area.
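The idea of a Pareto frontier can be sketched in a few lines: a design point stays on the frontier only if no other point is at least as good on every metric and strictly better on one. The design names and PPA numbers below are hypothetical.

```python
from typing import NamedTuple

class DesignPoint(NamedTuple):
    name: str
    power_mw: float
    delay_ns: float
    area_mm2: float

def dominates(a, b):
    """True if a is no worse than b on every metric and better on at least one."""
    a_metrics, b_metrics = a[1:], b[1:]  # lower is better for all three
    no_worse = all(x <= y for x, y in zip(a_metrics, b_metrics))
    better = any(x < y for x, y in zip(a_metrics, b_metrics))
    return no_worse and better

def pareto_front(points):
    """Return the points that no other point dominates."""
    return [p for p in points if not any(dominates(q, p) for q in points)]

# Hypothetical implementations of the same block.
candidates = [
    DesignPoint("low_power", 120.0, 2.0, 1.1),
    DesignPoint("high_speed", 300.0, 1.0, 1.4),
    DesignPoint("small_area", 180.0, 2.5, 0.8),
    DesignPoint("unrolled_naive", 320.0, 1.0, 1.9),
]

front = pareto_front(candidates)
print([p.name for p in front])
```

Three of the four candidates survive because each wins on a different metric. Which one is "best" depends on the product, which is exactly the information a "faster is better" rule lacks.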

General-purpose AI models have learned a simplified rule: faster is better. They have not learned the tradeoff space where “better” depends on specific constraints.

When Software Logic Fails in RTL

Consider loop unrolling. In software, loop unrolling reduces loop overhead and improves performance. In RTL, loop unrolling replicates hardware. Area grows roughly linearly with the unroll factor, and power rises as multiple datapaths switch simultaneously.
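A toy cost model illustrates the unrolling tradeoff. It assumes one copy of the datapath per unrolled iteration plus a fixed amount of control logic. The area figures are made-up placeholders, and real synthesis results would differ because of sharing, muxing, and routing overhead.

```python
import math

# Toy model of unrolling a 16-iteration loop in RTL.
# Area values are hypothetical placeholders in square micrometres.

def unrolled_cost(iterations, unroll, datapath_area_um2=500.0,
                  control_area_um2=200.0):
    """Return (cycles, area) when the loop body is replicated `unroll` times."""
    cycles = math.ceil(iterations / unroll)
    area = control_area_um2 + unroll * datapath_area_um2
    return cycles, area

for unroll in (1, 2, 4, 8):
    cycles, area = unrolled_cost(16, unroll)
    print(f"unroll={unroll}: {cycles} cycles, {area:.0f} um^2")
```

In this model, going from an unroll factor of 1 to 8 cuts the cycle count by 8x but multiplies area by 6x, a cost that has no counterpart when a compiler unrolls a software loop.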

Parallelization in software uses existing cores. Parallelization in RTL creates new logic blocks that must be fabricated.

Even clean-code refactoring can be harmful. Deep module hierarchies may create placement constraints and routing congestion that complicate physical design.

These examples show why software intuition can lead to inefficient silicon.

The Missing Feedback Loop

General-purpose AI models have never experienced the downstream consequences of RTL decisions.

RTL design is only the beginning of a long flow:

- RTL coding

- Synthesis

- Timing analysis

- Place and route

- Power analysis

- Signoff verification

- Silicon manufacturing

A design that simulates correctly may still fail timing, exceed power budgets, or become too large to manufacture economically.

AI models trained on code text do not see synthesis reports, timing violations, or routing congestion maps. Without this feedback loop, they cannot learn what constitutes efficient hardware.

Evidence From Early Benchmarks

Research evaluating LLM-generated RTL shows large variation even among designs identified as functionally correct. Functional correctness itself remains challenging. Among designs that work, area and power differences can be substantial.

In large chips, a modest area increase can translate into millions of dollars in cost difference. A power increase can push a design beyond thermal limits.

These are not theoretical concerns. They are the daily realities of semiconductor engineering.

Why This Matters Now

As AI becomes integrated into engineering workflows, the semiconductor industry faces a choice.

General-purpose AI can accelerate coding tasks, but without hardware awareness it may guide engineers toward inefficient architectures. This risk grows as more engineers rely on AI-generated suggestions without deep physical design insight.

The future of AI-assisted chip design requires tools trained on hardware-specific objectives: tools that understand PPA, timing closure, and physical constraints as first-class concepts.

The challenge is not writing Verilog. It is understanding what good Verilog means in silicon.

For teams building next-generation semiconductor tools, this gap is an opportunity. The industry needs AI that speaks the language of hardware engineering, not software optimization translated into RTL.

In the coming articles in this series, we will examine this divide more closely, starting with the difference between optimizing on hardware and optimizing the hardware, and why that distinction defines the future of AI in chip design.